Nvidia's $20B Groq Bet: LPU Chips Redefine AI Processing

Nvidia's USD 20B Groq bet focuses on LPU, SRAM as it launches Vera Rubin family

The AI chip wars are heating up, and Nvidia is making a bold move. The company's latest announcement targets a critical weakness in its current architecture: language processing performance.

Nvidia's new Vera Rubin chip family represents a strategic pivot into territory previously dominated by specialized competitors. By designing chips for specific stages of AI inference, the company is signaling its intent to close the performance gap with those competitors.

The investment is substantial, reportedly around $20 billion, which suggests Nvidia sees language processing as more than a side project. The architectural choices point to a direct challenge to companies like Groq that have carved out niches in specialized processing.

What makes these new chips potentially game-changing? The answer lies in how Nvidia is reimagining chip design to handle the split between the two stages of inference: prefill, which ingests the prompt, and decode, which generates the response.

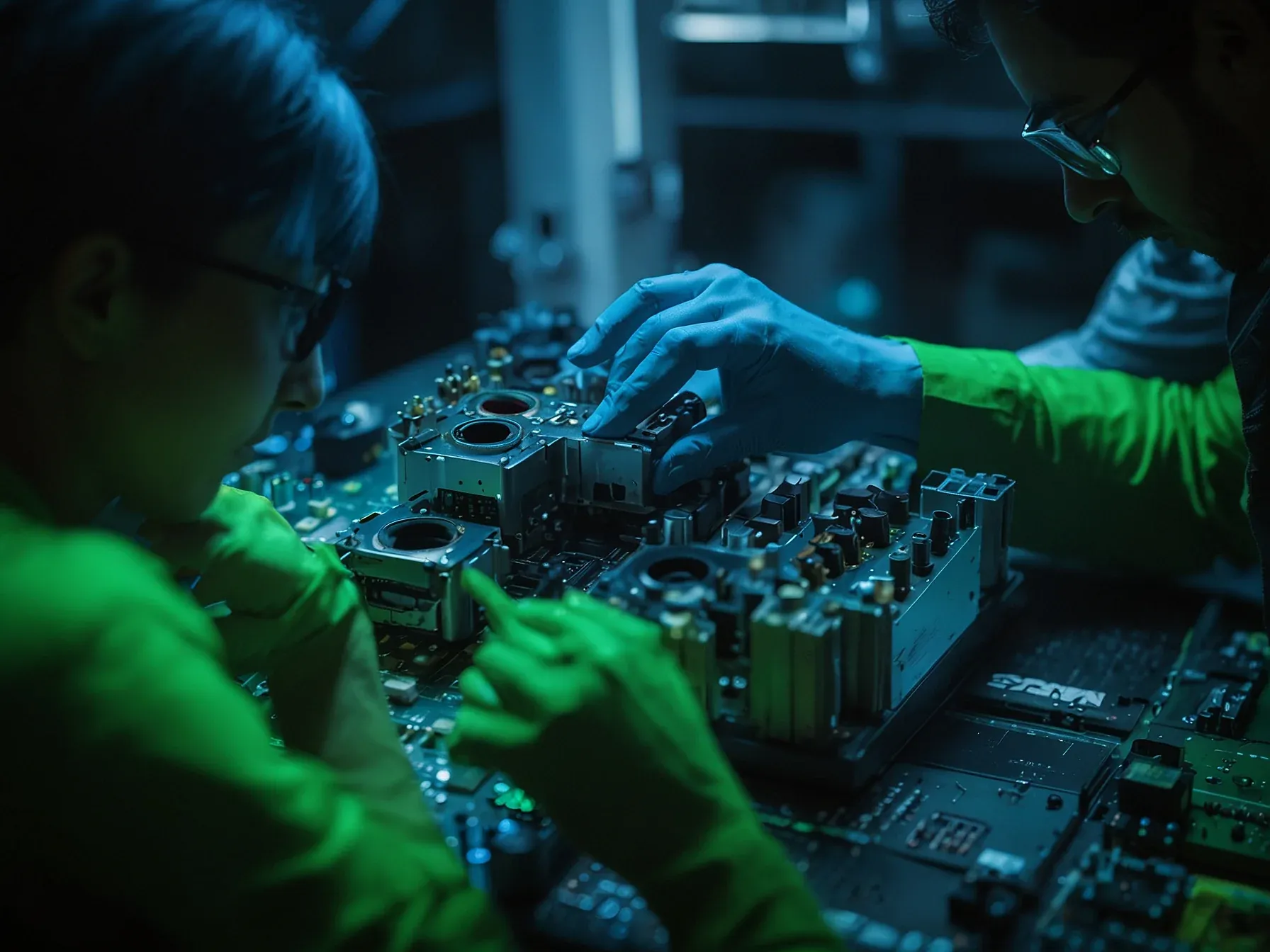

(Decode is where Nvidia was weak, and where Groq's special language processing unit (LPU) and its related SRAM memory shine. More on that in a bit.) Nvidia has announced an upcoming Vera Rubin family of chips that it's architecting specifically to handle the split between prefill and decode. The Rubin CPX component of this family is the designated "prefill" workhorse, optimized for massive context windows of 1 million tokens or more.
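To get a feel for why context windows of this size strain memory, consider the attention state (the key-value cache) that a transformer model keeps for every token it has read. The sketch below is a back-of-envelope estimate only: the layer count, head count, head size, and 16-bit values are hypothetical numbers picked for illustration, not the specifications of any Nvidia or Groq product or of any particular model.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Estimate the bytes needed to store keys and values for `tokens` positions."""
    # Factor of 2: each layer stores one key vector and one value vector
    # per KV head for every token.
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Hypothetical model shape, assumed purely for illustration.
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

per_token = kv_cache_bytes(1, LAYERS, KV_HEADS, HEAD_DIM)
million_tokens = kv_cache_bytes(1_000_000, LAYERS, KV_HEADS, HEAD_DIM)

print(f"per token:        {per_token / 1024:.0f} KiB")
print(f"1 million tokens: {million_tokens / 1e9:.1f} GB")
```

Under these assumed numbers the cache grows by 128 KiB per token, which comes to roughly 131 GB at 1 million tokens. The point is the linear growth with context length, not the specific figure.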

To handle this scale affordably, it moves away from the eye-watering expense of high bandwidth memory (HBM) -- Nvidia's current gold-standard memory that sits right next to the GPU die -- and instead utilizes 128GB of a new kind of memory, GDDR7. While HBM provides extreme speed (though not as quick as Groq's static random-access memory (SRAM)), its supply on GPUs is limited and its cost is a barrier to scale; GDDR7 provides a more cost-effective way to ingest massive datasets. Meanwhile, the "Groq-flavored" silicon, which Nvidia is integrating into its inference roadmap, will serve as the high-speed "decode" engine.
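The division of labor described above can be sketched in a few lines of Python. This is a toy illustration, not Nvidia's or Groq's software: `KVCache`, `prefill`, `decode`, and `toy_next_token` are hypothetical names, and the "model" is a placeholder that just sums integers. What it shows is the shape of the two phases: prefill handles the whole prompt in one pass, while decode produces one token at a time and rereads the accumulated state at every step.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Stand-in for the per-token state carried from prefill into decode."""
    entries: list = field(default_factory=list)


def prefill(prompt_tokens):
    """Ingest the whole prompt in one pass and build the cache."""
    cache = KVCache()
    cache.entries.extend(prompt_tokens)  # one entry per prompt token
    return cache


def toy_next_token(cache):
    """Placeholder for a model forward pass; it reads the entire cache."""
    return sum(cache.entries) % 100


def decode(cache, max_new_tokens):
    """Generate tokens sequentially; each step depends on the one before it."""
    output = []
    for _ in range(max_new_tokens):
        token = toy_next_token(cache)  # touches all state stored so far
        cache.entries.append(token)    # the cache grows by one entry per step
        output.append(token)
    return output


prompt = [3, 14, 15, 92, 65]
cache = prefill(prompt)                      # phase 1: bulk ingestion
generated = decode(cache, max_new_tokens=3)  # phase 2: step-by-step generation
print(len(cache.entries), generated)
```

Because each decode step waits on the previous one and touches all stored state, memory speed tends to matter most there, whereas prefill is dominated by processing the prompt in bulk.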

This is about neutralizing a threat from alternative architectures like Google's TPUs and maintaining the dominance of CUDA, Nvidia's software ecosystem that has served as its primary moat for over a decade. All of this was enough for Baker, the Groq investor, to predict that Nvidia's move to license Groq will cause all other specialized AI chips to be canceled -- that is, outside of Google's TPU, Tesla's AI5, and AWS's Trainium.

Nvidia's strategic move into language processing chips reveals a calculated response to emerging market challenges. The Vera Rubin chip family pairs the Rubin CPX, built for prefill, with Groq-derived silicon for decode, the stage where Groq's language processing unit (LPU) is strongest.

By targeting massive context windows of 1 million tokens or more, Nvidia is addressing a critical performance bottleneck in AI computing. The $20 billion investment suggests the company sees significant potential in specialized language processing architecture.

The Rubin CPX's design appears focused on making prefill workloads cheaper to run, chiefly by using GDDR7 in place of costlier HBM. This suggests Nvidia recognizes the limitations in its previous chip generations and is actively adapting.

While details remain sparse, the announcement points to purpose-built silicon for each stage of inference, which puts Nvidia in direct competition with specialized chip makers.

Still, questions linger about real-world performance and cost-effectiveness. But one thing's clear: the language processing chip race is heating up, with Nvidia making a bold, strategic bet on next-generation computing capabilities.

Common Questions Answered

What specific challenge is Nvidia addressing with the Vera Rubin chip family?

Nvidia is targeting language processing performance by developing specialized chips that can handle massive context windows of 1 million tokens or more. The Rubin CPX component is specifically designed to be a "prefill" workhorse, addressing previous architectural limitations in AI chip design.

How does the Vera Rubin chip compete with Groq's Language Processing Unit (LPU)?

The Vera Rubin chip family is Nvidia's strategic response to Groq's strength in language processing, which comes from the LPU and its SRAM memory. The Rubin CPX handles the prefill stage with optimizations for large context windows, while the Groq-derived silicon that Nvidia is integrating into its inference roadmap serves as the high-speed decode engine.

What makes the Rubin CPX component unique in AI chip design?

The Rubin CPX is specifically architected as a "prefill" workhorse capable of handling massive context windows of 1 million tokens or more. To keep that scale affordable, it uses 128GB of GDDR7 memory instead of the more expensive high bandwidth memory (HBM) found on Nvidia's current GPUs.