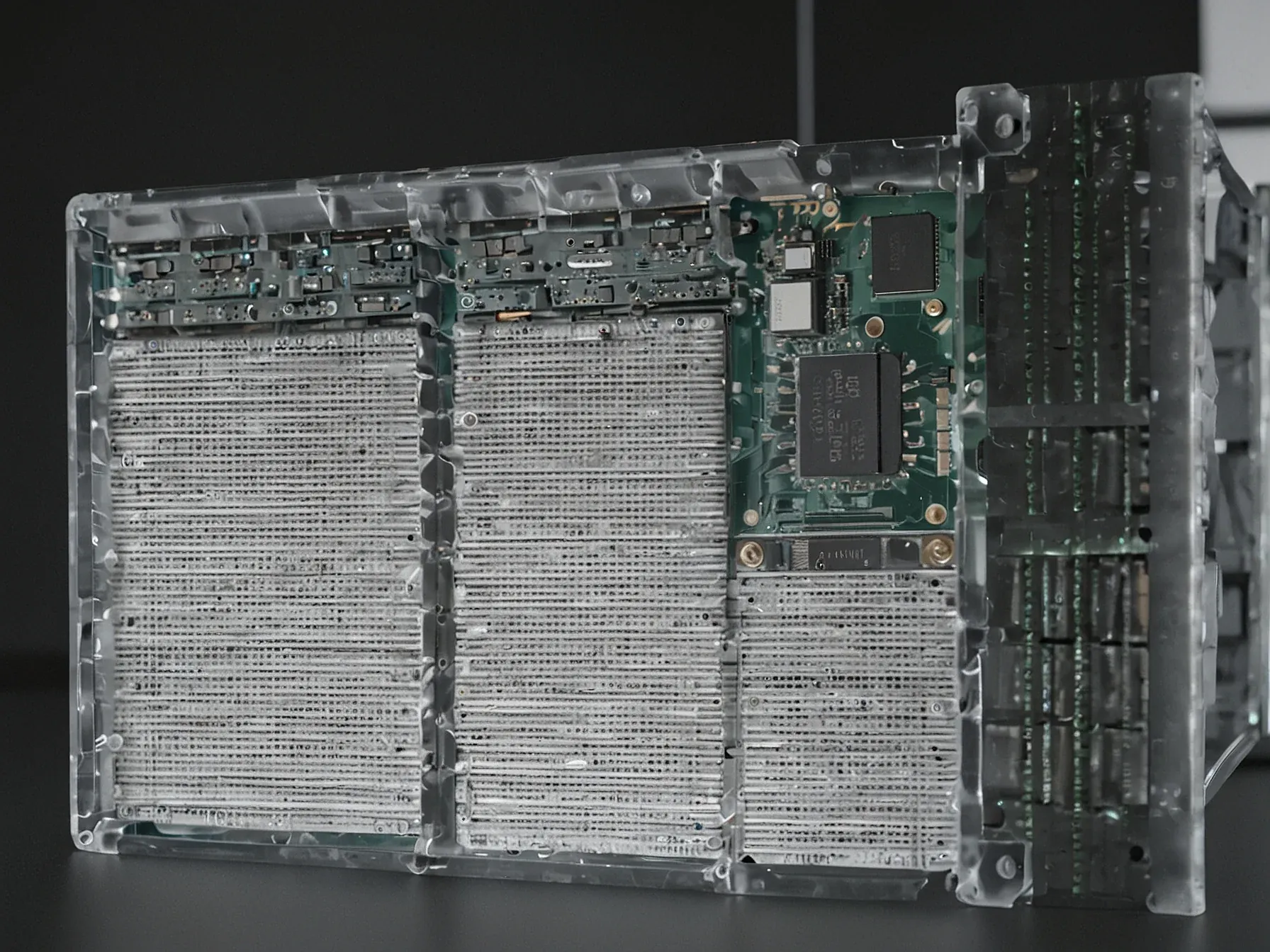

GPT-5.4 and Claude Opus Redefine AI Coding Limits

New GPT‑5.4 and Claude Opus 4.6 excel in coding, math, research

Why does the split between “hard‑core” and “hand‑hold” AI matter right now? One camp is busy feeding the newest language models into tools that developers already trust—think OpenAI’s GPT‑5.4 Thinking or Anthropic’s Claude Opus 4.6 paired with Codex or Claude Code. The other camp still leans on older versions for everyday chat.

The contrast is stark: the same family of models that can now draft research papers or solve differential equations still stumbles over simple, off‑the‑cuff questions. Andrej Karpathy notes that the leap in programming, math and scholarly assistance has been “massive this year,” hinting at a shift in what professionals can automate. Yet the gap raises a practical question for anyone watching the field: can the tools that excel in technical work be trusted for the casual queries that keep users coming back?

The answer, according to recent observations, sits somewhere between the two extremes.

The professional group uses the latest models, like OpenAI's GPT-5.4 Thinking or Claude Opus 4.6, inside capable harnesses like Codex or Claude Code for work in programming, math, and research. Progress in these areas has been massive this year, Karpathy says, with models now capable of autonomously restructuring entire codebases or hunting down security vulnerabilities on their own. Karpathy says this group and the casual users of free or older chat models are basically talking past each other.

> It really is simultaneously the case that OpenAI's free and I think slightly orphaned (?) "Advanced Voice Mode" will fumble the dumbest questions in your Instagram's reels and *at the same time*, OpenAI's highest-tier and paid Codex model will go off for 1 hour to coherently restructure an entire code base, or find and exploit vulnerabilities in computer systems.
>
> Karpathy via X

Karpathy's take points to something bigger: areas like code or math, where you can clearly check whether an answer is right or wrong and specifically reinforce it through reinforcement learning with verifiable rewards, are seeing more and especially measurable gains from AI progress than fuzzy domains like writing or consulting, where there's no clean metric to optimize against.

Why verifiability drives AI progress

This raises a core question in AI research right now: can general intelligence actually emerge from language models, or can these models only be tuned to perform well within specific domains?
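To make the idea of a verifiable reward concrete, here is a minimal Python sketch of what "clearly checking whether an answer is right or wrong" might look like for a math answer and for a piece of code. The function names `math_reward` and `code_reward`, the tolerance, and the test cases are illustrative assumptions; this is not a description of how OpenAI or Anthropic actually train their models.

```python
from typing import Callable, Sequence, Tuple


def math_reward(model_answer: str, expected: float, tol: float = 1e-9) -> float:
    """Hypothetical binary reward: 1.0 if the answer parses to the expected number."""
    try:
        value = float(model_answer.strip())
    except ValueError:
        # An answer that is not a number cannot be verified as correct.
        return 0.0
    return 1.0 if abs(value - expected) <= tol else 0.0


def code_reward(
    candidate: Callable[[int], int],
    cases: Sequence[Tuple[int, int]],
) -> float:
    """Hypothetical graded reward: fraction of (input, expected output) cases passed."""
    passed = 0
    for arg, want in cases:
        try:
            if candidate(arg) == want:
                passed += 1
        except Exception:
            # A crashing candidate simply fails that case.
            pass
    return passed / len(cases)


if __name__ == "__main__":
    # Illustrative checks with made-up answers and test cases.
    print(math_reward("42", 42.0))
    print(math_reward("forty-two", 42.0))
    square_cases = [(2, 4), (3, 9)]
    print(code_reward(lambda x: x * x, square_cases))
    print(code_reward(lambda x: x + x, square_cases))
```

Both functions return a number that can be computed automatically, which is what makes domains like math and code convenient optimization targets. No comparably simple function exists for judging an essay or a piece of consulting advice, which is the asymmetry the passage above describes.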

Do the newest models finally deliver on their promises? Karpathy says they excel where it counts: programming, mathematics, and research tasks. In his example, OpenAI's highest-tier Codex model works on its own for an hour to restructure an entire codebase, the kind of sustained run that professional users find valuable.

Yet the same systems stumble on everyday, casual queries, producing errors that casual users notice. This split performance explains why the free‑tier ChatGPT experience often leaves a different impression than the one formed by developers using Codex or Claude Code. The contrast is not a paradox, according to Karpathy, but a reflection of how the models are tuned for specific, high‑stakes workloads.

Massive progress this year has made autonomous code generation feasible, though Karpathy's remarks stop short of detailing its limits. It remains unclear whether future updates will close the gap in general‑purpose conversation without sacrificing specialized strength. For now, the evidence points to a tool that shines in technical domains while remaining fragile in simple dialogue.

Common Questions Answered

How are GPT-5.4 Thinking and Claude Opus 4.6 transforming professional coding and research tasks?

These latest AI models can autonomously restructure entire codebases and independently hunt down security vulnerabilities, dramatically accelerating professional development workflows. According to Karpathy, the progress in programming, mathematics, and research capabilities has been massive this year, with models now capable of complex technical tasks in hours.

Why do GPT-5.4 Thinking and Claude Opus 4.6 perform differently in professional versus casual contexts?

While these models excel in specialized domains like programming, mathematics, and research, they often struggle with simple, off-the-cuff conversational queries. This performance split creates a stark contrast between the experiences of professional users who see remarkable technical capabilities and casual users who might encounter more inconsistent interactions.

What makes the latest AI models like GPT-5.4 and Claude Opus 4.6 significant for professional development?

These models can draft research papers, solve differential equations, and generate sophisticated code. Professional developers value their ability to complete, in hours, technical tasks that would traditionally take much longer.