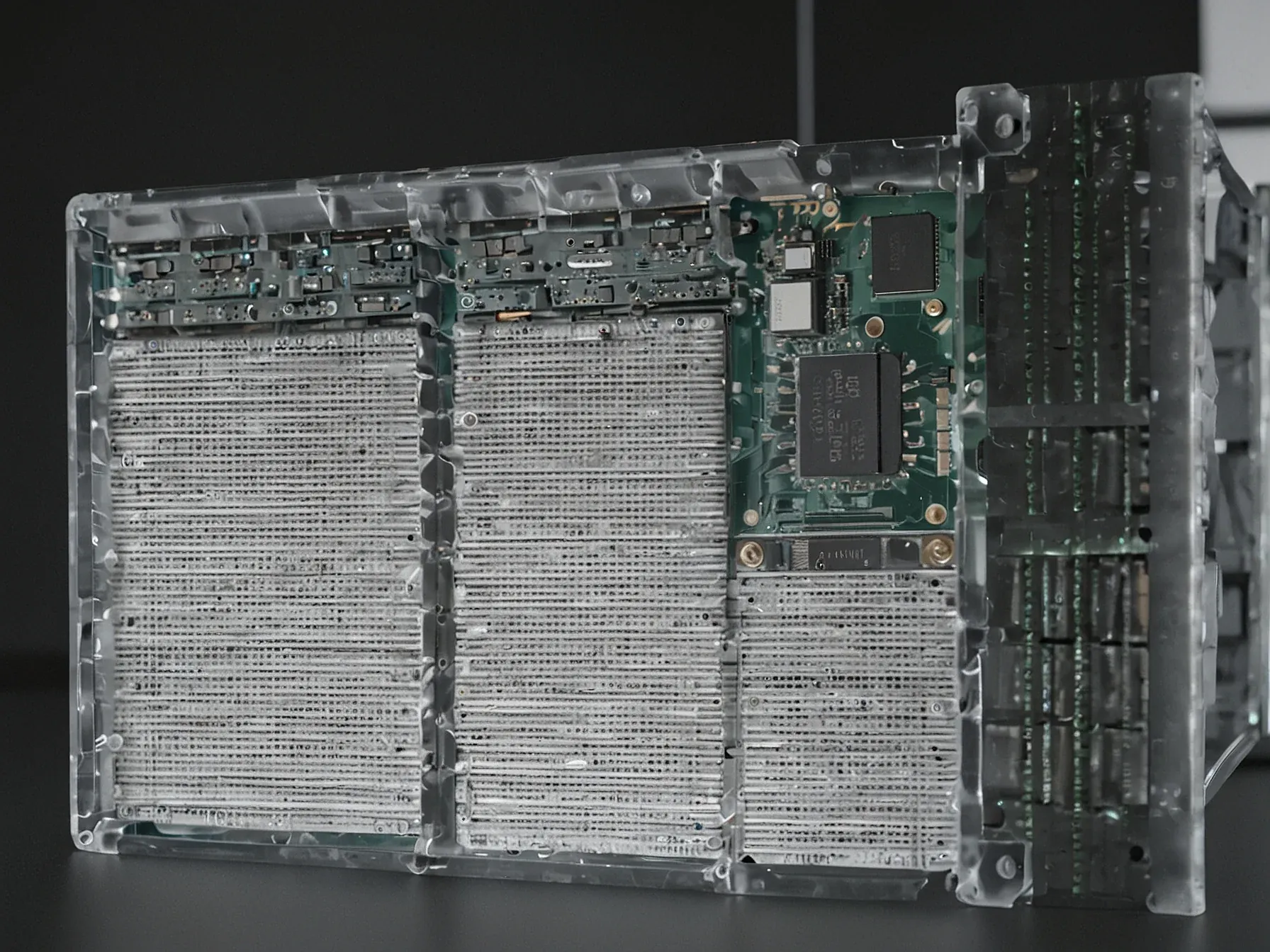

AI Agents Claude and OpenClaw Set New Accountability Rules

Claude and OpenClaw use guardrails to keep AI agents accountable and transparent

Claude and OpenClaw have entered the conversation about autonomous AI agents at a moment when the technology feels both inevitable and unsettled. The original headline hinted at “the new reality” and “chaos,” suggesting that developers are racing ahead while regulators and users scramble to keep pace. In the LLMs & Generative AI space, the excitement over agents that can act on their own is matched by a growing unease about what happens when they stray from intended tasks.

While the promise is clear—agents that can execute complex workflows without constant supervision—the risk of unpredictable behavior looms large. That's why engineers are turning to explicit guardrails: constraints that limit scope, require human checks, and record every step. The goal isn’t to stifle capability but to ensure that each decision can be traced and verified.

In short, the conversation is shifting from “what can these agents do?” to “how do we make sure they do it responsibly?”

With the right guardrails in place, agents can focus on specific actions and avoid making random, unaccounted-for decisions. Principles of responsible AI -- accountability, transparency, reproducibility, security, privacy -- are extremely important. Logging agent steps and human confirmation are absolutely critical.
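To make the idea concrete, here is a minimal Python sketch of the three guardrails described above: a scope limit, a human confirmation step, and a log of every step. It is an illustration under stated assumptions, not how Claude or OpenClaw actually implement guardrails. The `GuardedAgent` class, its field names, and the outcome strings are all hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Any, Callable, Dict, List, Set


@dataclass
class GuardedAgent:
    """Hypothetical wrapper: every action passes scope, confirmation, and logging."""

    # Scope limit: only actions registered here can ever run.
    allowed_actions: Dict[str, Callable[..., Any]]
    # Actions that must be approved by a person before they run.
    needs_confirmation: Set[str]
    # Human-in-the-loop hook; returns True to approve, False to decline.
    confirm: Callable[[str, dict], bool]
    # Append-only record of every attempted step, successful or not.
    audit_log: List[dict] = field(default_factory=list)

    def _record(self, action: str, args: dict, outcome: str) -> None:
        self.audit_log.append(
            {"ts": time.time(), "action": action, "args": args, "outcome": outcome}
        )

    def run(self, action: str, **args: Any) -> Any:
        # 1. Refuse anything outside the declared scope, and log the attempt.
        if action not in self.allowed_actions:
            self._record(action, args, "rejected:out_of_scope")
            raise PermissionError(f"action {action!r} is not allowed")
        # 2. Ask a human before running sensitive actions.
        if action in self.needs_confirmation and not self.confirm(action, args):
            self._record(action, args, "rejected:not_confirmed")
            return None
        # 3. Execute and log the step so it can be traced later.
        result = self.allowed_actions[action](**args)
        self._record(action, args, "executed")
        return result
```

In practice the `confirm` hook might prompt an operator in a chat window or ticketing tool; here it is just a callable so the sketch stays self-contained. The point of the design is that rejected attempts are logged alongside executed ones, so the audit trail covers what the agent tried to do as well as what it did.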

Also, when agents deal with so many diverse systems, it's important they speak the same language. Ontology becomes very important so that events can be tracked, monitored, and accounted for. A shared domain-specific ontology can define a "code of conduct." These ethics can help control the chaos.
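One way to picture a shared ontology is as a small, fixed vocabulary of event types that every agent must use when it reports what it did. The Python sketch below assumes a hypothetical four-event vocabulary and a hypothetical `AgentEvent` record; none of these names come from Claude, OpenClaw, or any published standard.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class EventType(str, Enum):
    """Hypothetical shared vocabulary: the only event kinds agents may emit."""

    ACTION_REQUESTED = "action_requested"
    ACTION_CONFIRMED = "action_confirmed"
    ACTION_EXECUTED = "action_executed"
    ACTION_REJECTED = "action_rejected"


@dataclass(frozen=True)
class AgentEvent:
    """One trackable event, described in terms every system agrees on."""

    agent_id: str
    event_type: EventType
    target_system: str
    detail: str

    def to_json(self) -> str:
        payload = asdict(self)
        # Store the plain string value so any consumer can read it.
        payload["event_type"] = self.event_type.value
        return json.dumps(payload, sort_keys=True)


def parse_event(raw: str) -> AgentEvent:
    """Parse an event, rejecting any type outside the shared vocabulary."""
    data = json.loads(raw)
    # EventType(...) raises ValueError for an unknown event type.
    data["event_type"] = EventType(data["event_type"])
    return AgentEvent(**data)
```

Because `parse_event` rejects anything outside the agreed vocabulary, a monitoring system that consumes these records can count, filter, and audit events from many agents without per-agent translation. That is the sense in which a shared ontology lets events be "tracked, monitored, and accounted for."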

When tied together with a shared trust and distributed identity framework, we can build systems that enable agents to do truly useful work. When done right, an agentic ecosystem can greatly offload the human "cognitive load" and enable our workforce to perform high-value tasks. Humans will benefit when agents handle the mundane.

Claude and OpenClaw illustrate how autonomous agents are moving from curiosity to practical tools. Yet the shift has sparked debate about job security and the broader implications of machine agency. The case for guardrails is that they keep agents focused on specific actions and away from random, unaccounted-for decisions.

Guardrails matter. The principles of responsible AI (accountability, transparency, reproducibility, security, and privacy) are highlighted as essential, and logging each step plus requiring human confirmation is presented as a baseline safeguard. Even so, both systems still rely heavily on human oversight to remain trustworthy.

Are the current safeguards sufficient for complex, real-world deployments? The piece stops short of proving that the guardrails fully eliminate risk, leaving open the question of how reproducibility and privacy will hold up at scale. It also remains unclear whether these measures will prevent the chaos some observers fear as agentic AI expands.

For now, the tools demonstrate promise, but their long‑term impact remains uncertain.

Common Questions Answered

How do Claude and OpenClaw ensure responsible AI agent behavior?

Claude and OpenClaw implement guardrails that focus on keeping AI agents accountable and transparent by establishing clear principles of responsible AI. These guardrails include logging agent steps, requiring human confirmation, and ensuring agents can track and monitor events across diverse systems.

What are the key principles of responsible AI mentioned in the article?

The article highlights five critical principles of responsible AI: accountability, transparency, reproducibility, security, and privacy. These principles are essential for ensuring that autonomous AI agents operate within defined boundaries and can be reliably tracked and understood.

Why is ontology important for autonomous AI agents?

Ontology is crucial for autonomous AI agents because it enables them to speak a common language and track events across different systems. By establishing a standardized framework, agents can more effectively monitor and log their actions, enhancing transparency and accountability.