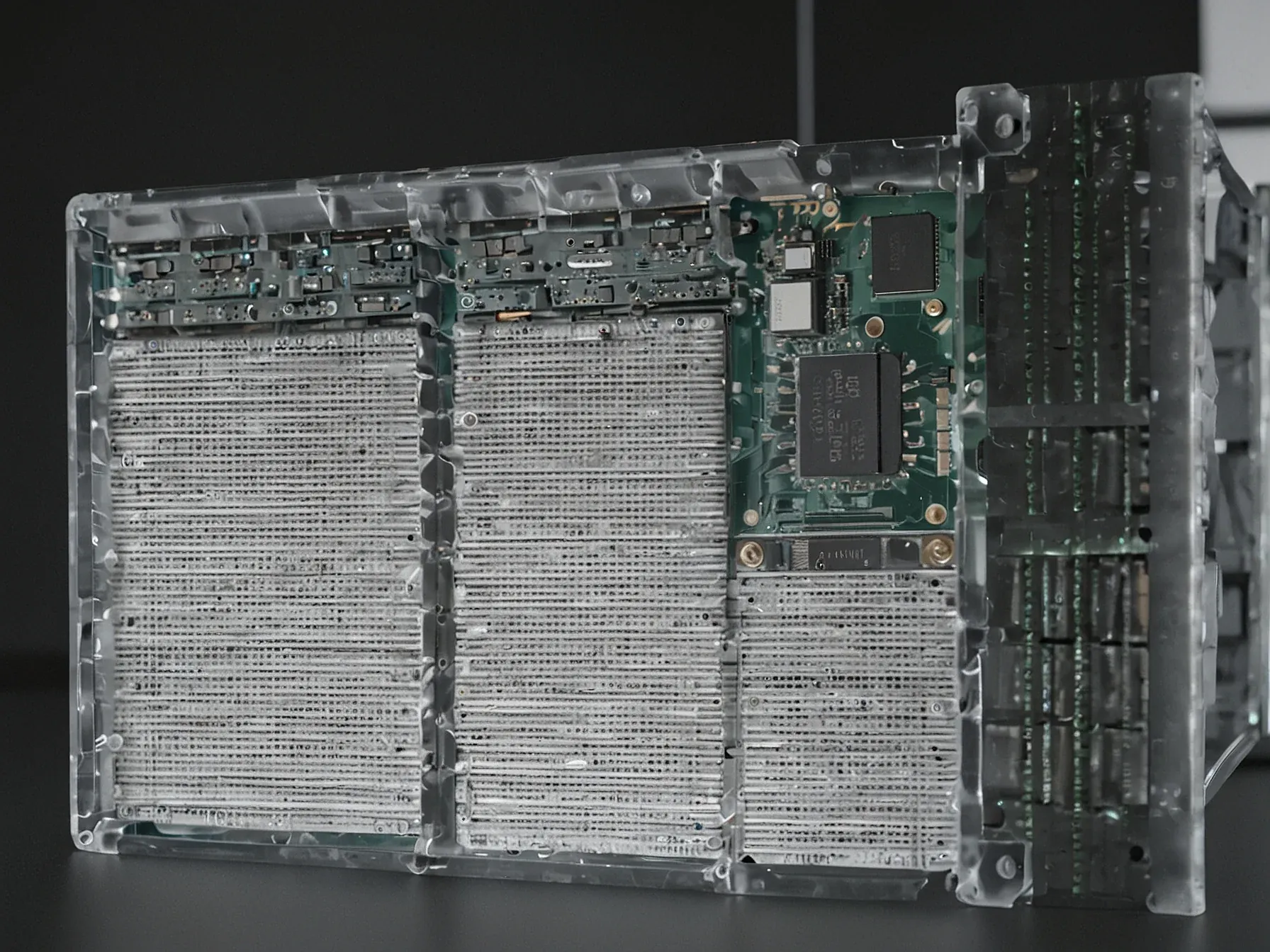

Editorial illustration for Anthropic researcher Nicholas Carlini reports surge of bugs in Project Glasswing

Anthropic's Project Glasswing: Major Safety Bug Revelations

Anthropic researcher Nicholas Carlini reports surge of bugs in Project Glasswing

Why does a flurry of bugs matter for a model that Anthropic has already labeled “too dangerous to release”? While the tech community has been watching Project Glasswing’s development from a cautious distance, the internal safety audits are now generating a different kind of headline. Nicholas Carlini, a security researcher at Anthropic, has been probing the model’s code and behavior for months, but the past fortnight has produced an unexpected spike in findings.

He says the recent tally dwarfs everything he’s recorded before, a statement that hints at deeper flaws than the usual edge‑case glitches. The surge is enough to make even seasoned developers pause. Simon Willison, a well‑known commentator on AI systems, summed up the tension in a single line: “Saying ‘our model is too dangerous to …” The unfinished thought underscores a growing unease that extends beyond marketing hype and into the realm of concrete risk.

What Carlini uncovered, and why it matters, becomes clear in his own words.

Carlini said in a video about Project Glasswing: "I've found more bugs in the last couple of weeks than I found in the rest of my life combined." Simon Willison, a respected developer and commentator, writes: "Saying 'our model is too dangerous to release' is a great way to build buzz around a new model, but in this case I expect their caution is warranted." Willison would also like to see OpenAI involved, noting that its GPT-5.4 already has a strong reputation for finding security vulnerabilities.

Is Anthropic repeating OpenAI's earlier caution? The company now labels its Claude Mythos Preview as too dangerous to release, citing thousands of OS and browser vulnerabilities uncovered by an AI that few humans could audit. Carlini's claim that he found more bugs in a couple of weeks than in the rest of his life combined suggests a rapid escalation in vulnerability discovery, yet it says nothing about the severity of those bugs.

Willison's remark concedes that such a claim can double as marketing, though he expects the caution is warranted in this case. Without independent verification, it’s unclear whether the reported flaws translate into real‑world risk or remain theoretical. The parallel with GPT‑2’s controversial rollout adds a historical echo, though the industry’s reaction this time is not documented.

If an AI can surface vulnerabilities faster than a human researcher can, the burden on the people who must review and fix them could become overwhelming. Whether Anthropic will withhold Claude Mythos or adjust its deployment strategy remains uncertain, and further evidence is needed to assess the true impact of these findings.

Further Reading

- Claude Mythos Preview: Assessing Claude Mythos Preview's cybersecurity capabilities - Anthropic

- Anthropic researcher shows how AI may soon be able to hack apps and devices by itself - Inshorts

- Project Glasswing: An initiative to secure the world's software - Anthropic (YouTube)

- AI Finds Vulns You Can't With Nicholas Carlini - Security Cryptography Whatever

- Project Glasswing: Securing critical software for the AI era - Hacker News

Common Questions Answered

What specific concerns has Nicholas Carlini raised about Project Glasswing?

Nicholas Carlini has reported an unprecedented surge of bug discoveries in connection with Project Glasswing, saying he has found more bugs in the last couple of weeks than in the rest of his life combined. He has not detailed their severity, but the volume is consistent with Anthropic's account of thousands of OS and browser vulnerabilities uncovered by its Claude Mythos Preview model, which the company has labeled too dangerous to release.

Why does Anthropic consider Claude Mythos Preview too dangerous to release?

The article gives few specifics. Anthropic applies the label to its Claude Mythos Preview model, citing thousands of OS and browser vulnerabilities uncovered by the AI. The sheer volume of bugs Carlini reports indicates how quickly such a model can surface flaws, which could pose significant risks if it were released widely.

How has the tech community responded to Anthropic's cautious approach with Project Glasswing?

Simon Willison, a respected developer, acknowledges that calling a model too dangerous to release is a great way to build buzz, but he expects Anthropic's caution is warranted in this case. More broadly, the tech community appears to be watching Project Glasswing from a cautious distance, aware of the risks that come with advanced AI models.