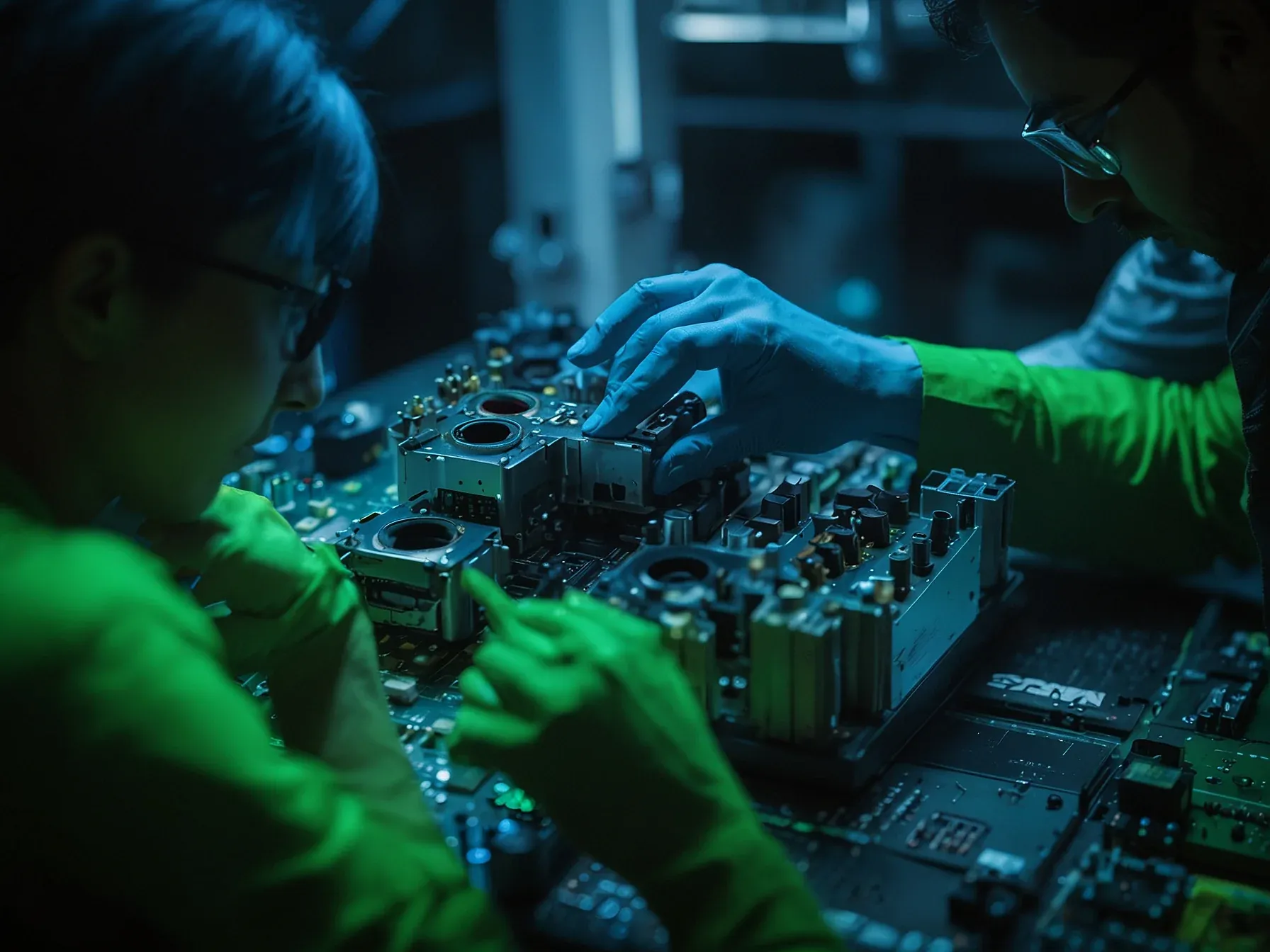

Editorial illustration for NVIDIA's BlueField‑4 CMX platform uses Dynamo to manage G1 GPU HBM context

NVIDIA BlueField-4: Next-Gen GPU Memory Management

NVIDIA's BlueField‑4 CMX platform uses Dynamo to manage G1 GPU HBM context

In today’s high‑throughput generative models, the speed at which a system can fetch and update key‑value pairs often decides whether a prompt returns in seconds or minutes. NVIDIA’s latest BlueField‑4‑powered CMX platform tackles that bottleneck by splitting memory into three distinct layers, each tuned for a different access pattern. The hottest data lives in GPU HBM, where nanosecond latency is essential for active token generation.

A middle tier of system RAM provides a buffer, letting the accelerator off‑load overflow without stalling. Finally, a local SSD tier holds warm data that can be pulled back into faster storage when demand spikes again. This hierarchy isn’t just a hardware curiosity; it requires precise coordination to avoid costly shuffles and to keep the pipeline humming.
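To make the demote-on-overflow and promote-on-reuse behavior concrete, here is a minimal sketch of a three-tier KV block cache. The class name `TieredKVCache`, the per-tier block capacities, and the LRU eviction policy are all hypothetical choices for illustration. This is not Dynamo's API or NVIDIA's actual placement algorithm.

```python
from collections import OrderedDict


class TieredKVCache:
    """Toy KV block cache spanning G1 (HBM), G2 (system RAM), and G3 (local SSD).

    Hypothetical sketch: capacities are counted in blocks, and each tier is an
    OrderedDict kept in LRU order (least recently used first).
    """

    TIERS = ("G1", "G2", "G3")

    def __init__(self, capacities):
        # capacities: dict mapping tier name -> maximum number of blocks
        self.capacity = dict(capacities)
        self.tiers = {name: OrderedDict() for name in self.TIERS}

    def locate(self, block_id):
        """Return the tier currently holding block_id, or None if it is absent."""
        for name in self.TIERS:
            if block_id in self.tiers[name]:
                return name
        return None

    def _insert(self, level, block_id, data):
        """Place a block at tier `level`, demoting LRU blocks one tier down on overflow."""
        if level >= len(self.TIERS):
            # Evicted from G3: this sketch simply drops it (a fuller design might spill to G4).
            return
        name = self.TIERS[level]
        tier = self.tiers[name]
        tier[block_id] = data
        tier.move_to_end(block_id)  # mark as most recently used
        while len(tier) > self.capacity[name]:
            victim_id, victim_data = tier.popitem(last=False)  # least recently used
            self._insert(level + 1, victim_id, victim_data)

    def put(self, block_id, data):
        """Store a freshly produced KV block; active generation writes to G1."""
        where = self.locate(block_id)
        if where is not None:
            del self.tiers[where][block_id]  # keep each block in exactly one tier
        self._insert(0, block_id, data)

    def get(self, block_id):
        """Fetch a block, promoting it back to G1 if it had been demoted."""
        where = self.locate(block_id)
        if where is None:
            return None
        data = self.tiers[where].pop(block_id)
        self._insert(0, block_id, data)
        return data
```

For example, with capacities of 2, 2, and 4 blocks, writing five blocks pushes the oldest one down to G3, and reading it again pulls it back into G1 while cascading other blocks downward. The design choice to illustrate is that every move is driven by recency: hot blocks stay near the GPU, and only overflow travels to slower tiers.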

Orchestration tools are the glue that binds these tiers together, ensuring that the right piece of context sits in the right place at the right time.


AI infrastructure teams use orchestration frameworks, such as NVIDIA Dynamo, to help manage this context across these storage tiers:

- G1 (GPU HBM) for hot, latency‑critical KV used in active generation
- G2 (system RAM) for staging and buffering KV off HBM
- G3 (local SSDs) for warm KV that is reused over shorter timescales; because G3 is tied to a single node, it is harder to manage and maintain and does not scale easily
- G4 (shared storage) for cold artifacts, history, and results that must be durable but are not on the immediate critical path

G1 is optimized for access speed, while G3 and G4 are optimized for durability. As context grows, the KV cache quickly exhausts local storage capacity (G1-G3), yet pushing it down to enterprise storage (G4) introduces unacceptable overheads and drives up both cost and power consumption.
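A quick back-of-the-envelope calculation shows why long contexts outgrow G1 so fast. In a standard transformer, every token stores one key vector and one value vector per layer per KV head, so KV size grows linearly with context length. The model dimensions below are hypothetical, chosen only for illustration, and the sketch ignores framework overheads such as block padding.

```python
def kv_bytes_per_token(num_layers, num_kv_heads, head_dim, bytes_per_elem):
    # Factor of 2: one key vector and one value vector per layer per KV head.
    return 2 * num_layers * num_kv_heads * head_dim * bytes_per_elem


def kv_cache_bytes(tokens, **model):
    # KV cache size scales linearly with the number of cached tokens.
    return tokens * kv_bytes_per_token(**model)


# Hypothetical model dimensions (not tied to any specific product), 2-byte FP16/BF16 KV.
MODEL = dict(num_layers=80, num_kv_heads=8, head_dim=128, bytes_per_elem=2)

if __name__ == "__main__":
    per_token = kv_bytes_per_token(**MODEL)
    print(f"KV per token: {per_token / 1024:.0f} KiB")
    for tokens in (8_192, 128_000, 1_000_000):
        gb = kv_cache_bytes(tokens, **MODEL) / 1e9
        print(f"{tokens:>9,} tokens -> {gb:8.1f} GB of KV for one sequence")
```

With these assumed dimensions, each token costs 320 KiB of KV, so a single million-token context needs roughly 328 GB before any batching. Multiply that by many concurrent sessions and it becomes clear why capacity has to come from tiers below HBM.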

Can the new BlueField‑4 CMX platform keep pace with exploding context demands? NVIDIA's design pairs the Dynamo orchestration framework with a three‑tier storage hierarchy: G1 GPU HBM for hot KV, G2 system RAM for staging, and G3 local SSDs for warm data. By offloading less time‑critical KV to slower tiers, the system aims to stretch the limited HBM capacity that traditionally bottlenecks large‑scale agents.

The architecture assumes that agents will benefit from persistent long‑term memory across turns, tools, and sessions, allowing reasoning to build on earlier context rather than restart. However, NVIDIA's description does not disclose performance metrics or latency figures for the G2 and G3 paths, leaving open the question of whether the added complexity introduces overhead that offsets the intended gains. The reliance on Dynamo to coordinate movement between tiers also implies a software layer that must scale in lockstep with model size, a requirement that remains unproven in practice.

In short, the BlueField‑4‑powered CMX platform presents a structured approach to context management, but its effectiveness for trillion‑parameter models and multi‑million‑token windows is still uncertain.


Common Questions Answered

How does NVIDIA's BlueField-4 CMX platform optimize key-value pair retrieval for generative models?

The platform uses a three-tier memory architecture with distinct storage layers optimized for different access patterns. By splitting data across GPU HBM (G1), system RAM (G2), and local SSDs (G3), the system can manage context more efficiently and reduce latency during token generation.

What role does NVIDIA Dynamo play in managing context across different memory tiers?

NVIDIA Dynamo serves as an orchestration framework that helps AI infrastructure teams manage key-value pairs across multiple storage tiers. It enables intelligent data placement, moving less critical data to slower storage while keeping hot, latency-critical data in high-speed GPU HBM.

What are the specific characteristics of the G1, G2, and G3 memory tiers in the BlueField-4 CMX platform?

G1 (GPU HBM) is designed for hot, latency-critical key-value pairs used in active generation with nanosecond access times. G2 (system RAM) provides a buffer for staging data off HBM, while G3 (local SSDs) stores warm key-value pairs that are reused over shorter timescales but are limited by single-node constraints.